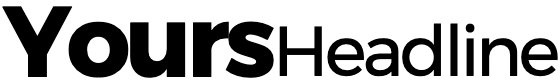

Ann Johnson was just 30 years old when she experienced a stroke in 2005 that left her paralyzed and unable to speak. At the time, she was a math and P.E. teacher at Luther College in Regina, had an eight-year-old stepson and had recently welcomed a baby girl into the world.

“Overnight, everything was taken from me,” she wrote, according to a post from Luther College.

The stroke left her with locked-in syndrome (LIS), a rare neurological disorder that can cause full paralysis except for the muscles that control eye movement, according to the National Institutes of Health.

Johnson, now 47, described her experience with LIS in a paper she wrote for a psychology class in 2020, typed letter by letter.

“You’re fully cognizant, you have full sensation, all five senses work, but you are locked inside a body where no muscles work,” she wrote. “I learned to breathe on my own again, I now have full neck movement, my laugh returned, I can cry and read and over the years my smile has returned, and I am able to wink and say a few words.”

A year later, in 2021, Johnson learned of a research study that had the potential to change her life. She was selected as one of eight participants for the clinical trial, offered by the departments of neurology and neurosurgery at the University of California, San Francisco (UCSF), and was the only Canadian.

“I always knew that my injury was rare, and living in Regina was remote. My kids were young when my stroke happened, and I knew participating in a study would mean leaving them. So, I waited until this summer to volunteer – my kids are now 25 and 17,” she wrote.

Now, Johnson’s work with a team of U.S. neurologists and computer scientists has come to fruition.

A study published in Nature on Wednesday revealed that Johnson is the first person in the world to speak out loud via decoded brain signals.

An implant that rests on her brain records her neurological activity while an artificial intelligence (AI) model translates those signals into words. In real time, that decoded text is synthesized into speech, spoken aloud by a digital avatar that can even generate Johnson’s facial expressions.

Ann Johnson sits in front of a digital avatar, through which she can speak out loud via a brain-computer interface.

Noah Berger/UCSF

The system can translate Johnson’s brain activity into text at a rate of nearly 80 words per minute, much faster than the 14 words per minute she can achieve typing out words with her current communication device, which tracks her eye movements. The AI decoder was accurate 75 per cent of the time in translating words, the researchers found.

The breakthrough was demonstrated in a video released by UCSF, in which Johnson speaks to her husband for the first time using her own voice, which the AI model can mimic thanks to a recording of Johnson taken on her wedding day.

“How are you feeling about the Blue Jays today?” her husband Bill asks, wearing a cap from the Toronto baseball team.

“Anything is possible,” she responds via the avatar.

Johnson’s husband jokes that she doesn’t seem very confident in the Jays.

“You are right about that,” she says, turning to look at him, smiling.

Ann Johnson working with researchers on a technology that allows her to speak via brain signals after a stroke left her paralyzed.

Noah Berger/UCSF

The research team behind the system, a technology known as a brain-computer interface, hopes it can secure approval from U.S. regulators to make this system accessible to the public.

“Our goal is to restore a full, embodied way of communicating, which is the most natural way for us to talk with others,” says Edward Chang, chair of neurological surgery at UCSF and one of the lead authors of the study. “These advancements bring us much closer to making this a real solution for patients.”

So, how did they do it?

The team surgically implanted a paper-thin grid of 253 electrodes onto the surface of Johnson’s brain, covering the areas that are important for speech. The researchers then asked Johnson to attempt to silently speak sentences.

“The electrodes intercepted the brain signals that, if not for the stroke, would have gone to muscles in Ann’s lips, tongue, jaw and larynx, as well as her face,” a news release from UCSF reads.

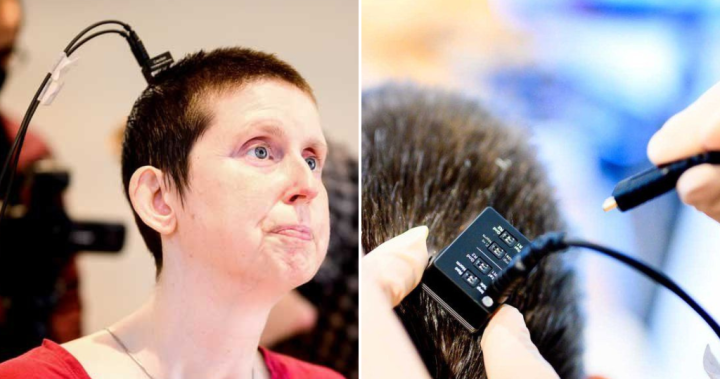

Those brain signals get transferred into a port that is screwed onto the outside of Johnson’s head. From there, a cable that plugs into the port can be hooked up to a bank of computers that decode the signals into text and synthesize the text into speech.

Animation of the brain implant Ann Johnson received that allows her to speak via a digital avatar.

Noah Berger/UCSF

The AI model doesn’t exactly decode Johnson’s thoughts, but interprets how Johnson’s brain would move her face to make sounds — a process that also allows the AI to generate her facial expressions and emotions.

The AI translates these signals, which were meant for the muscles, into the building blocks of speech: components called phonemes.

“These are the sub-units of speech that form spoken words in the same way that letters form written words. ‘Hello,’ for example, contains four phonemes: ‘HH,’ ‘AH,’ ‘L’ and ‘OW,’” according to the UCSF release.

“Using this approach, the computer only needed to learn 39 phonemes to decipher any word in English. This both enhanced the system’s accuracy and made it three times faster.”
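To make the phoneme idea concrete, here is a minimal Python sketch of how a stream of phonemes could be stitched back into words with a dictionary lookup. It is an illustration only, not the UCSF team's decoder, which relies on a trained AI model. The tiny `LEXICON` and the `phonemes_to_words` function are hypothetical, and only the "hello" entry comes from the UCSF release.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical mini pronunciation dictionary (ARPAbet-style symbols).
# Only the "hello" entry is taken from the article; the rest are illustrative.
LEXICON: Dict[Tuple[str, ...], str] = {
    ("HH", "AH", "L", "OW"): "hello",
    ("AE", "N"): "ann",
    ("Y", "UW"): "you",
}


def phonemes_to_words(phonemes: List[str]) -> Optional[List[str]]:
    """Split a phoneme sequence into dictionary words, or return None."""
    n = len(phonemes)
    # best[i] holds one valid word list covering phonemes[:i], if any exists.
    best: List[Optional[List[str]]] = [None] * (n + 1)
    best[0] = []
    for end in range(1, n + 1):
        for start in range(end):
            if best[start] is None:
                continue  # no valid segmentation reaches this start point
            word = LEXICON.get(tuple(phonemes[start:end]))
            if word is not None:
                best[end] = best[start] + [word]
                break
    return best[n]


print(phonemes_to_words(["HH", "AH", "L", "OW", "AE", "N"]))
```

Because every English word can be spelled out from the same small set of phonemes, adding a new word to a system like this means adding one dictionary entry rather than teaching the decoder a new signal pattern.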

Over the course of a few weeks, Johnson worked with the research team to train the AI to “recognize her unique brain signals for speech.”

They did this by having Johnson repeat phrases built from a bank of 1,024 words, over and over, until the AI learned to recognize the brain activity associated with each phoneme.

The study states that researchers were able to get the AI model to a level of “high performance with less than two weeks of training.”

“The accuracy, speed and vocabulary are crucial,” said Sean Metzger, one of the graduate students who helped develop the AI model. “It’s what gives Ann the potential, in time, to communicate almost as fast as we do, and to have much more naturalistic and normal conversations.”

Ann Johnson, 47, can speak out loud again for the first time after she was paralyzed in a stroke that happened in 2005.

Noah Berger/UCSF

Johnson is still getting used to hearing her old voice again, generated by the AI. The model was trained on a recording of a speech Johnson gave on her wedding day, allowing her digital avatar to sound similar to how she spoke before the stroke.

“My brain feels funny when it hears my synthesized voice,” she told UCSF. “It’s like hearing an old friend.”

“My daughter was one when I had my injury, it’s like she doesn’t know Ann.… She has no idea what Ann sounds like.”

Her daughter only knows the British-accented voice of her current communication device.

The next steps for the researchers will be to develop a wireless version of the system that wouldn’t require Johnson to be physically hooked up to computers. Currently, she’s wired in with cables that plug into the port on the top of her head.

“Giving people like Ann the ability to freely control their own computers and phones with this technology would have profound effects on their independence and social interactions,” said study co-author David Moses, a professor in neurological surgery.

A researcher plugs a wire into a port that is screwed into Ann Johnson’s head, which is connected to the grid of electrodes resting on her brain.

Noah Berger/UCSF

Johnson says being part of a brain-computer interface study has given her “a sense of purpose.”

“I feel like I am contributing to society. It feels like I have a job again. It’s amazing I have lived this long; this study has allowed me to really live while I’m still alive!”

Johnson was inspired to become a trauma counsellor after hearing about the Humboldt Broncos bus crash that claimed the lives of 16 young hockey players in 2018. With the help of this AI interface, and the freedom and ease of communication it allows, she hopes that dream will soon become a reality.

“I want patients there to see me and know their lives are not over now,” she wrote. “I want to show them that disabilities don’t need to stop us or slow us down.”